Introduction

AI systems have evolved far beyond simple question-and-answer exchanges. Enterprises now expect AI to execute complex, multi-step workflows, make real-time decisions, and handle domain-specific tasks across regulated environments. This shift has exposed a critical gap: clever prompts alone cannot carry AI systems through real-world operational complexity.

The business case for context engineering is concrete. In the studies cited later in this article, RAG-based context engineering reduced hallucinations by roughly 55% to 100%, depending on the model and implementation quality. Yet enterprises without AI-ready data abandon 60% of projects before reaching production.

This article covers what context engineering actually is, why it directly affects accuracy, reliability, and deployment success, and what enterprises must get right to build AI systems that hold up under real operational demands.

TL;DR

- Context engineering designs the full information environment an AI receives—memory, tools, data sources, and instructions—not just individual prompts

- Where prompt engineering optimizes a single input, context engineering coordinates the full stack, enabling multi-step, stateful enterprise tasks

- It reduces hallucinations, enables scalable agentic workflows, and speeds up decision-making, with measurable business impact

- Without proper context engineering, AI systems produce inconsistent outputs, fail to scale, and generate errors that compound over time

- Treated as core infrastructure, context engineering improves AI performance measurably across operations

What Is Context Engineering?

Context engineering is the systematic practice of designing, assembling, and managing all information an AI model receives—including instructions, memory, retrieved documents, user history, tool outputs, and business rules—to ensure accurate reasoning and action on any task.

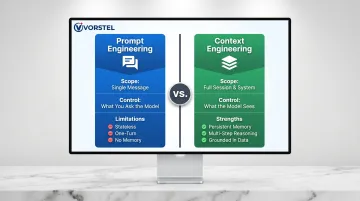

Anthropic defines context engineering as "the set of strategies for curating and maintaining the optimal set of tokens during LLM inference." The distinction from prompt engineering comes down to scope: prompt engineering operates at the message level, while context engineering operates at the session and system level.

Where Context Engineering Applies

Context engineering is essential in any system where an LLM must do more than answer a single question:

- Customer service agents handling multi-turn conversations with access to customer history and business policies

- Enterprise copilots navigating SAP, Salesforce, or ERP systems with real-time data integration

- Autonomous workflow systems managing procurement, onboarding, or compliance processes end-to-end

- Diagnostic tools in healthcare or finance requiring verified, up-to-date reference data

- AI-powered ERP/CRM integrations coordinating across multiple data sources and business systems

Context Engineering as the Production Reliability Layer

Context engineering functions as the infrastructure layer determining whether an AI system is dependable in production—much like solid data engineering makes machine learning models reliable in the field.

The practical difference between the two disciplines breaks down clearly:

| Discipline | Scope | Controls |
|---|---|---|
| Prompt Engineering | Single message | What you ask the model |
| Context Engineering | Full session & system | What the model sees |

Without well-designed context, even a precisely worded prompt reaches a model that is operating with incomplete or misaligned information.
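
To make "what the model sees" concrete, here is a minimal sketch of a context bundle rendered into a generic role/content message list. The `ContextBundle` class, its field names, and the message layout are illustrative assumptions, not any vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """Hypothetical container for everything the model sees on one call."""
    instructions: str  # system instructions and business rules
    memory: list[str] = field(default_factory=list)  # facts kept from earlier turns
    retrieved_docs: list[str] = field(default_factory=list)  # documents fetched for this request
    tool_outputs: list[str] = field(default_factory=list)  # results returned by tools or APIs

    def to_messages(self, user_prompt: str) -> list[dict[str, str]]:
        """Render the bundle as a generic role/content message list."""
        sections = [self.instructions]
        for title, items in (
            ("Memory", self.memory),
            ("Retrieved documents", self.retrieved_docs),
            ("Tool outputs", self.tool_outputs),
        ):
            if items:  # skip empty sections so they add no tokens
                sections.append(f"{title}:\n" + "\n".join(f"- {item}" for item in items))
        return [
            {"role": "system", "content": "\n\n".join(sections)},
            {"role": "user", "content": user_prompt},
        ]


bundle = ContextBundle(
    instructions="Answer using only the material provided.",
    memory=["Customer prefers email contact."],
    retrieved_docs=["Refund policy: 30 days from delivery."],
)
messages = bundle.to_messages("Can I still return my order?")
```

In this sketch the user prompt is only the last message. Everything in the system message is the part that context engineering controls.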

Key Advantages of Context Engineering

The advantages here are grounded in measurable, operational impact—the kind visible in error rates, deployment speed, decision quality, and business scalability—not just theoretical AI performance benchmarks.

Advantage 1: Significant Reduction in AI Hallucinations and Output Errors

Context engineering dramatically cuts AI hallucinations—instances where models confidently generate inaccurate or fabricated information—by ensuring the model reasons from verified, relevant, structured data rather than relying on parametric (baked-in) knowledge alone.

How It Works in Practice:

Techniques like Retrieval-Augmented Generation (RAG) pull verified documents, databases, or enterprise records into the model's context window at generation time. Responses are grounded in real, up-to-date information rather than trained assumptions.
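
The retrieve-then-ground pattern can be sketched in a few lines. This is a toy illustration under stated assumptions: word overlap stands in for the vector search a production system would likely use, and the function names and sample knowledge base are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by word overlap with the query (a toy stand-in for vector search)."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc)), doc) for doc in documents]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    # Drop documents that share no words with the query.
    return [doc for score, doc in ranked[:top_k] if score > 0]


def build_grounded_prompt(query: str, documents: list[str]) -> str:
    """Place retrieved passages ahead of the question so the answer can rely on them."""
    passages = retrieve(query, documents)
    sources = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


knowledge_base = [
    "Refunds are accepted within 30 days of delivery.",
    "Standard shipping takes 3 to 5 business days.",
    "Support is available by email on weekdays.",
]
prompt = build_grounded_prompt("Are refunds accepted after delivery?", knowledge_base)
```

The instruction to admit when the sources lack an answer matters as much as the retrieval step: it gives the model an alternative to guessing.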

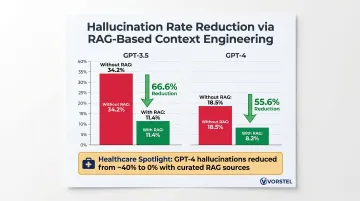

Research by Bechard & Ayala (2024) demonstrated RAG reduced GPT-3.5 hallucinations from 34.2% to 11.4% (a 66.7% relative reduction) and GPT-4 hallucinations from 18.5% to 8.2% (a 55.7% relative reduction). In healthcare applications, RAG with curated sources reduced GPT-4 hallucinations from approximately 40% to 0%.
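
The relative reductions quoted above follow from the before and after rates: subtract the new rate from the old one and divide by the old one. A short sketch of that arithmetic, with a hypothetical helper name:

```python
def relative_reduction(before: float, after: float) -> float:
    """Return the relative drop from `before` to `after`, as a percentage."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


gpt35 = relative_reduction(34.2, 11.4)  # about 66.7
gpt4 = relative_reduction(18.5, 8.2)    # about 55.7
curated = relative_reduction(40.0, 0.0) # 100.0
```

Rounded to one decimal place, the results are 66.7% for GPT-3.5 and 55.7% for GPT-4. A drop from 40% to 0% is a 100% relative reduction.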

Why This Matters:

Hallucinations are one of the most cited barriers to enterprise AI adoption. In regulated sectors like healthcare, finance, and legal, a single inaccurate AI output can trigger compliance failures, financial loss, or reputational damage. Context engineering targets a major root cause of hallucinations: a model answering without verified, relevant information in its context.

McKinsey found 29% of organizations experienced negative consequences specifically from AI inaccuracy. Context engineering moves AI from "impressive demo" to "production-grade tool" by anchoring responses in verifiable data.

KPIs Impacted:

- Output accuracy rate

- Hallucination rate

- Error escalation frequency

- Customer complaint rate

- Compliance audit pass rate

When This Advantage Matters Most:

This advantage has the highest impact in industries with low tolerance for error, such as healthcare diagnostics, legal document analysis, financial advisory tools, and enterprise compliance systems, and in any scenario where AI outputs drive real-world decisions.

Advantage 2: Scalable AI Across Complex, Multi-Step Enterprise Workflows

Context engineering enables AI systems to sustain coherent, accurate behavior across long, multi-step processes—employee onboarding, procurement workflows, customer resolution journeys, ERP-integrated operations—where single prompts would lose context midway.

How It Works in Practice:

By architecting persistent memory, structured state-tracking, and modular context assembly, AI agents hand off information between steps, retain prior decisions, and dynamically retrieve relevant enterprise data at each stage—enabling genuine agentic behavior across distributed enterprise systems.
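
As a rough sketch of state tracking and handoff between steps, the example below carries prior decisions forward and persists them between calls. The `WorkflowState` class, the step names, and the JSON persistence are assumptions made for illustration. A real system would more likely use a database or a workflow engine.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class WorkflowState:
    """Hypothetical record carried from one workflow step to the next."""
    workflow_id: str
    completed_steps: list[str] = field(default_factory=list)
    decisions: dict[str, str] = field(default_factory=dict)

    def record(self, step: str, decision: str) -> None:
        """Store the outcome of a step so later steps can see it."""
        self.completed_steps.append(step)
        self.decisions[step] = decision

    def context_for(self, next_step: str) -> str:
        """Summarize prior decisions as context for the next model call."""
        history = "\n".join(f"- {s}: {self.decisions[s]}" for s in self.completed_steps)
        return f"Workflow {self.workflow_id}\nPrior decisions:\n{history}\nNext step: {next_step}"


state = WorkflowState(workflow_id="PO-1001")
state.record("vendor_selection", "Vendor A chosen on price")
state.record("budget_check", "Within approved budget")

# Persist between steps (a JSON string here, a database in a real system).
saved = json.dumps(asdict(state))
restored = WorkflowState(**json.loads(saved))
step_context = restored.context_for("approval_routing")
```

Because the state is serialized rather than held in a single prompt, a later step, or a different agent, can pick up the workflow with the earlier decisions intact.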

Why This Matters:

Most enterprise processes are not single-turn interactions. They involve sequential decisions, multiple data systems, and conditional logic. Prompt-only AI fails here because it is stateless and context-blind, while context-engineered AI maintains continuity across entire workflows.

Gartner predicts 40% of enterprise applications will feature task-specific AI agents by end of 2026, up from less than 5% in 2025: at least an eightfold increase in one year.

Organizations implementing context engineering can automate multi-step processes end-to-end, reducing manual intervention, lowering operational costs, and accelerating cycle times.

KPIs Impacted:

- Process automation rate

- Task completion time

- Manual intervention frequency

- Cost per completed workflow

- AI deployment cycle length

When This Advantage Matters Most:

This advantage is highest-impact at enterprise scale—particularly for organizations running complex SAP or Salesforce ecosystems, high-volume operations, or cross-functional AI agents navigating multiple systems and data sources simultaneously. For enterprises at this stage, partnering with an AI innovation consulting firm like Vorstel Technologies can accelerate architecture and deployment of context engineering infrastructure across these workflows.

Advantage 3: Faster, More Reliable Decision-Making Through Contextual Intelligence

Context engineering equips AI systems to surface the right insight at the right moment—drawing on user history, business rules, live data feeds, and enterprise knowledge bases—rather than offering generic responses requiring human filtering before action.

How It Works in Practice:

Through dynamic context assembly, AI systems adapt responses based on who is asking, what they've done before, what business policy says, and what current data shows—enabling personalized decision support that reaches the right person without manual filtering.
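
One way to picture dynamic context assembly is a function that selects policy, history, and live data according to who is asking. The sketch below assumes a hypothetical `Request` type and an illustrative in-memory policy table. Real business rules would come from a governed source.

```python
from dataclasses import dataclass


@dataclass
class Request:
    """Hypothetical description of who is asking and what they asked."""
    user_role: str
    question: str
    recent_actions: list[str]


# Illustrative policy table, invented for this example.
POLICIES = {
    "sales": "Discounts above 15% need manager approval.",
    "support": "Refunds above $500 need supervisor approval.",
}


def assemble_context(request: Request, live_data: dict[str, str]) -> str:
    """Combine role policy, user history, and current data for one request."""
    parts = [f"Role: {request.user_role}"]
    policy = POLICIES.get(request.user_role)
    if policy:
        parts.append(f"Policy: {policy}")
    if request.recent_actions:
        # Keep only the latest actions so history does not crowd out the rest.
        parts.append("Recent actions: " + "; ".join(request.recent_actions[-3:]))
    for name, value in live_data.items():
        parts.append(f"{name}: {value}")
    parts.append(f"Question: {request.question}")
    return "\n".join(parts)


request = Request("sales", "Can I offer 20% off?", ["Viewed account ACME", "Opened quote Q-17"])
context = assemble_context(request, {"Account tier": "Gold"})
```

The same question from a support user would pull the refund policy instead, which is the sense in which the context adapts to the person asking.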

Why This Matters:

Decision latency is a measurable cost in enterprise environments—the longer the right insight takes to reach the right person, the more opportunities are lost. Context-engineered AI collapses that latency by pre-assembling decision-relevant information before it's needed.

Microsoft and Accenture's deployment showed 97% of employees completing routine tasks 15 times faster with context-aware Copilot, while AI-powered sales intelligence generated 43% more sales opportunities by reasoning over SEC filings, industry context, and business data in seconds rather than days.

Organizations deploying context-aware AI copilots in sales, operations, or customer success respond faster, personalize at scale, and surface risks before they escalate.

KPIs Impacted:

- Decision cycle time

- AI-assisted recommendation acceptance rate

- Customer response time

- Risk identification speed

- Revenue influenced by AI-assisted decisions

When This Advantage Matters Most:

This advantage is most critical in fast-moving environments, such as e-commerce, retail operations, and executive decision dashboards, where the gap between insight and action directly impacts business outcomes.

What Happens When Context Engineering Is Missing or Ignored

Enterprises deploying AI systems without deliberate context engineering face predictable, costly consequences. These aren't edge cases — they're patterns that compound as deployments scale.

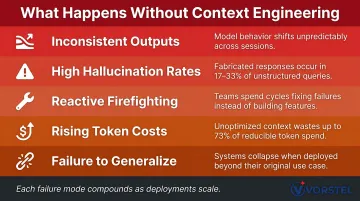

- Inconsistent outputs: Without structured context, model responses drift based on minor prompt variations, giving contradictory answers to similar questions and eroding user trust.

- High hallucination rates: Stanford RegLab found that even RAG-equipped commercial legal AI tools hallucinate 17–33% of the time, which shows that retrieval alone does not remove the problem. Without deliberate design of what is retrieved and how it is presented, error rates like these create real liability in regulated workflows.

- Reactive firefighting: Teams spend more time correcting AI errors than acting on AI output, reversing the productivity gains the system was meant to deliver.

- Rising token costs: Ungoverned context causes prompt bloat and compounding maintenance overhead. Redis benchmarks show RAG architectures can cut costs by 73% versus naive long-context approaches.

- Failure to generalize: AI that performs well in controlled demos breaks under real operational volume or across departments. McKinsey reports nearly two-thirds of organizations haven't begun scaling AI enterprise-wide, and the pilot-to-production gap is largely a context and data readiness problem.

Each of these failure modes is avoidable. The common thread is the absence of intentional design around what the model knows, when it knows it, and how that knowledge is structured — which is precisely what context engineering addresses.
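
One simple guard against the prompt bloat described above is an explicit token budget. The sketch below keeps the highest-scoring snippets that fit within a limit. It assumes relevance scores already exist and uses a word count as a rough proxy for tokens, since real tokenizers count differently. The function names are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    """Rough proxy: count whitespace-separated words (real tokenizers differ)."""
    return len(text.split())


def fit_to_budget(snippets: list[tuple[float, str]], max_tokens: int) -> list[str]:
    """Keep the highest-scoring snippets that fit within the token budget."""
    selected: list[str] = []
    used = 0
    for _score, text in sorted(snippets, key=lambda item: item[0], reverse=True):
        cost = estimate_tokens(text)
        if used + cost <= max_tokens:
            selected.append(text)
            used += cost
    return selected


candidates = [
    (0.9, "Refund window is 30 days."),
    (0.2, "The company was founded in a small office many years ago."),
    (0.7, "Refunds go to the original payment method."),
]
kept = fit_to_budget(candidates, max_tokens=12)
```

Here the low-relevance company history is dropped because the two refund snippets already use the full budget.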

How to Get the Most Value from Context Engineering

Context engineering delivers greater returns when treated as an architectural discipline from the start, not a patch applied after problems emerge. Three practices determine how much value organizations actually capture:

- Standardize context design across all AI touchpoints — from customer-facing agents to internal operations tools. Output quality should be uniform and predictable regardless of which team or system is involved.

- Track KPIs tied to context quality on a regular cadence: hallucination rates, task completion accuracy, and decision acceptance rates. Use these signals to refine context architectures over time. Organizations with successful AI initiatives invest up to four times more in data and analytics foundations than peers.

- Close the feedback loop — data generated by context-aware AI systems is itself a strategic asset. Feed observed outcomes back into context design to create a continuous improvement cycle rather than a one-time implementation.
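
The KPI tracking practice above can start from a small log of reviewed interactions. This is a minimal sketch: the `Interaction` record and its three boolean fields are assumptions about what a review process might capture, not a standard schema.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """Hypothetical log record for one reviewed AI response."""
    hallucinated: bool    # reviewer flagged unsupported content
    task_completed: bool  # the task finished without manual takeover
    accepted: bool        # the user acted on the recommendation


def context_kpis(log: list[Interaction]) -> dict[str, float]:
    """Compute hallucination, completion, and acceptance rates as fractions."""
    total = len(log)
    if total == 0:
        return {"hallucination_rate": 0.0, "task_completion_rate": 0.0, "acceptance_rate": 0.0}
    return {
        "hallucination_rate": sum(i.hallucinated for i in log) / total,
        "task_completion_rate": sum(i.task_completed for i in log) / total,
        "acceptance_rate": sum(i.accepted for i in log) / total,
    }


log = [
    Interaction(hallucinated=False, task_completed=True, accepted=True),
    Interaction(hallucinated=True, task_completed=False, accepted=False),
    Interaction(hallucinated=False, task_completed=True, accepted=False),
    Interaction(hallucinated=False, task_completed=True, accepted=True),
]
kpis = context_kpis(log)
```

Comparing these rates before and after a change to the context design gives a direct signal of whether the change helped.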

For organizations looking to build this capability without starting from scratch, Vorstel Technologies integrates context engineering directly into existing digital transformation programs — at any stage of the journey — so enterprises can act on these principles without rebuilding from the ground up.

Conclusion

Context engineering determines what information AI acts on, how it reasons, and whether its behavior holds up across real-world deployments. Prompt engineering shapes individual interactions; context engineering shapes outcomes at scale.

The advantages—from reduced hallucinations to scalable agentic workflows to sharper decision intelligence—compound as context engineering matures within an organization. Early investment builds a capability that grows harder for competitors to replicate over time.

That compounding effect only holds when context engineering is treated as an ongoing practice, one that evolves alongside AI systems, changing data landscapes, and new business requirements. Organizations that commit to this discipline consistently extract more measurable, durable value from their AI investments than those chasing isolated prompt-level fixes.

Frequently Asked Questions

What is the difference between context engineering and prompt engineering?

Prompt engineering focuses on optimizing a single input to get a desired AI output, while context engineering designs the entire information environment the AI operates within—including memory, retrieved data, tools, and business rules—enabling reliable, scalable performance across complex tasks.

Why does context engineering matter for enterprise AI systems?

Enterprise AI systems operate across multi-step workflows, regulated environments, and high-stakes decisions. Context engineering ensures AI outputs are accurate, consistent, and grounded in real business data rather than generic model assumptions, which is what separates reliable production AI from brittle prototypes.

How does context engineering reduce AI hallucinations?

Hallucinations occur when models lack verified information to reason from. Context engineering fixes this by supplying structured, relevant data at generation time—through techniques like RAG—so responses reflect facts, not guesswork.

What are the key components of a well-engineered AI context?

A well-engineered context typically includes:

- System instructions and business rules

- User history and memory

- Dynamically retrieved documents or database records

- Tool outputs and API responses

Together, these form the complete information environment the model reasons from.

What happens if context engineering is skipped during AI deployment?

Skipping context engineering leads to inconsistent and unreliable outputs, high error and hallucination rates, inability to scale across departments or workflows, and increasing maintenance costs—all of which undermine AI ROI over time.

Is context engineering only relevant for large enterprises, or can startups benefit too?

Large enterprises feel the impact most acutely, but startups building AI-native products benefit too. Getting context engineering right from the start prevents costly rearchitecting later and makes AI features more reliable from day one.